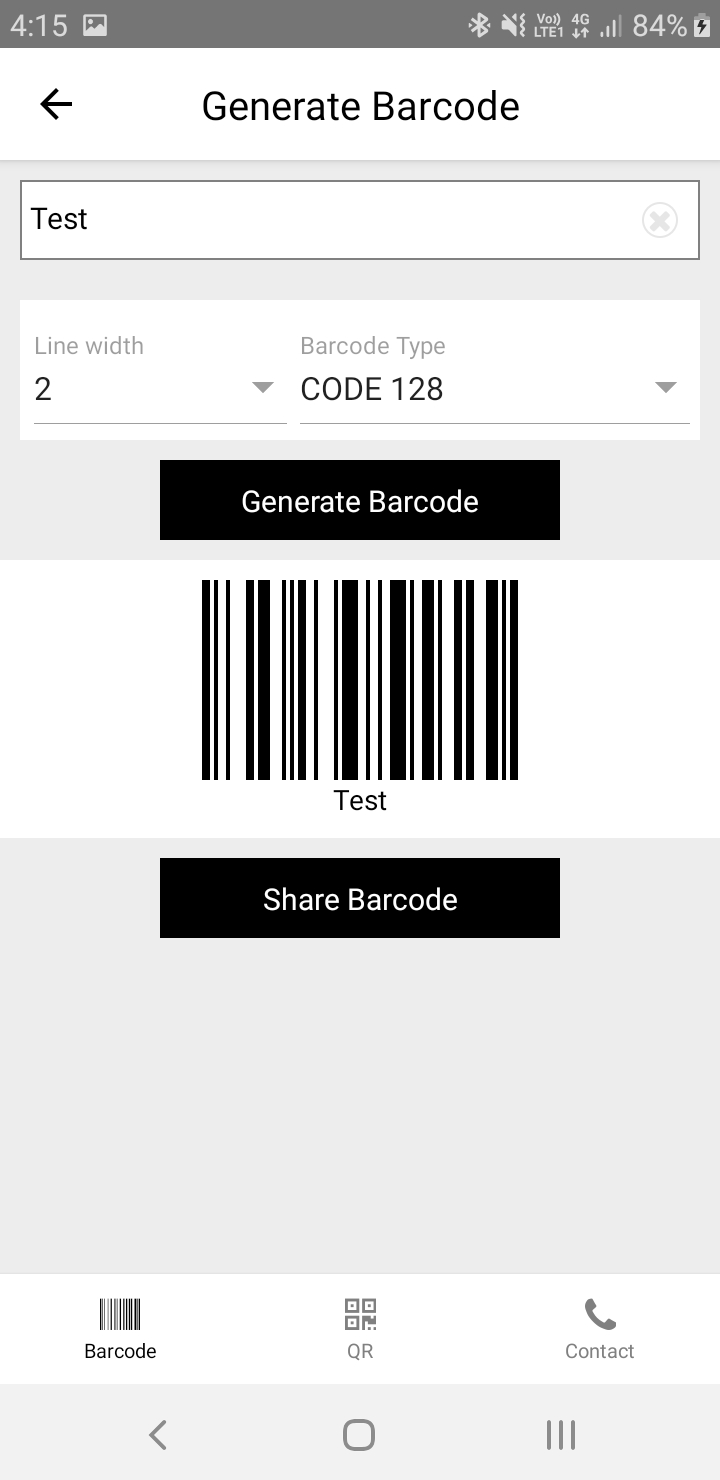

You will need a Firebase project with the Blaze plan enabled to access the Cloud Vision APIs.

We'll be going through these steps in this article:

You can take a look at the final code in this GitHub Repository.

IMPORTANT - We will not be using Expo in our project. You can follow this documentation to set up the environment and create a new React Native app. Make sure you're following the React Native CLI Quickstart, not the Expo CLI Quickstart.

You can install these packages in advance or while going through the article.

As you add more native dependencies to your project, you may exceed the 64K method limit (65,536 method references per DEX file) of the Android build system. Once you reach this limit, you will start to see the following error while attempting to build your Android application:

`Execution failed for task ':app:mergeDexDebug'.`

Use this documentation to enable multidexing. To learn more about multidex, view the official Android documentation.

Head to the Firebase console and sign in to your account. Once you create a new project, you'll see the dashboard. Now, click on the Android icon to add an Android app to the Firebase project.

You will need the package name of the application to register it. You can find the package name in the AndroidManifest.xml file, which is located in android/app/src/main/. Once you enter the package name and proceed to the next step, you can download the google-services.json file. Place this file in the android/app directory. After adding the file, proceed to the next step.

The console will then ask you to add some configuration to the Gradle files. First, add the google-services plugin as a dependency inside your android/build.gradle file.
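Because registration only works when the package names match, a quick local check can confirm that the downloaded google-services.json belongs to this app before you build. The sketch below is a hypothetical Node helper, not part of the Firebase tooling. It assumes the manifest still declares `package="..."` on its root `<manifest>` element (newer templates may declare it as `namespace` in android/app/build.gradle instead) and that google-services.json lists each registered Android app under `client[].client_info.android_client_info.package_name`.

```javascript
// Hypothetical pre-build check (not part of Firebase): does the package name
// in AndroidManifest.xml match an Android client in google-services.json?

// Extract the `package` attribute from the root <manifest ...> element.
// Assumes the attribute is present on that tag; returns null otherwise.
function packageFromManifest(manifestXml) {
  const match = manifestXml.match(/<manifest\b[^>]*\spackage\s*=\s*"([^"]+)"/);
  return match ? match[1] : null;
}

// google-services.json holds one entry per registered app under `client`.
function googleServicesHasPackage(googleServicesJson, packageName) {
  const config = JSON.parse(googleServicesJson);
  const clients = Array.isArray(config.client) ? config.client : [];
  return clients.some(
    (c) => c?.client_info?.android_client_info?.package_name === packageName
  );
}

// Example wiring from the project root (paths as described in the steps):
// const fs = require('fs');
// const pkg = packageFromManifest(
//   fs.readFileSync('android/app/src/main/AndroidManifest.xml', 'utf8'));
// const ok = googleServicesHasPackage(
//   fs.readFileSync('android/app/google-services.json', 'utf8'), pkg);
```

A `false` result usually means the app was registered in the Firebase console under a different package name than the one in the manifest.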
In this tutorial, we will be building a non-Expo React Native application to recognize text from an image using Firebase's ML Kit. You will need a basic understanding of React and React Native.

Firebase

Firebase is a platform developed by Google for creating mobile and web applications. It was originally an independent company founded in 2011. In 2014, Google acquired the platform, and it is now their flagship offering for app development.

The Cloud Vision API allows developers to easily integrate vision detection features within applications, including image labeling, face and landmark detection, optical character recognition (OCR), and tagging of explicit content. Recognizing text in images is also called Optical Character Recognition, or OCR. OCR engines work by scanning images and extracting their text in a machine-readable format. Tesseract.js, for example, is an open-source text recognition engine that allows us to extract text from an image.
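To make the OCR flow more concrete, here is a minimal sketch of how cloud document text recognition might be called from the app. It assumes the `@react-native-firebase/ml` module, whose default export is invoked as `ml()` and exposes `cloudDocumentTextRecognizerProcessImage(localPath)`; the result is assumed to carry a `text` string and a `blocks` array whose entries each have a `text` field. Passing `ml` in as a parameter is a choice made here for illustration so the helpers can run without a device, and `recognizeText` and `blockTexts` are hypothetical names.

```javascript
// Sketch only. `ml` is assumed to be the default export of
// @react-native-firebase/ml; in the app: recognizeText(ml, localImagePath)

// Run cloud OCR on a local image and return the full text plus per-block text.
async function recognizeText(ml, localImagePath) {
  // Cloud-based recognition (needs a Blaze-plan project with Cloud Vision access).
  const result = await ml().cloudDocumentTextRecognizerProcessImage(localImagePath);
  return { fullText: result.text, blocks: blockTexts(result) };
}

// Flatten a recognition result into one trimmed string per text block,
// skipping empty blocks. Assumes each block exposes a `text` field.
function blockTexts(result) {
  return (result.blocks || [])
    .map((block) => block.text.trim())
    .filter(Boolean);
}
```

In a component, the returned `blocks` array can be mapped straight to `<Text>` elements, while `fullText` is convenient for copying or searching.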

Firebase ML Kit's text recognition APIs can recognize text in any Latin-based character set. They can also be used to automate data-entry tasks such as processing credit cards, receipts, and business cards.
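When OCR output feeds a data-entry task such as reading a card number, a checksum can catch a misread digit before the value is used. The sketch below applies the standard Luhn check to a recognized string; `isLuhnValid` is a hypothetical helper, not part of Firebase's API, and the 12 to 19 digit length range is an assumption about typical card numbers.

```javascript
// Hypothetical post-processing for OCR'd card numbers (not a Firebase API).
// Strips spaces and dashes, then validates the digits with the Luhn checksum.
function isLuhnValid(recognized) {
  const digits = recognized.replace(/[\s-]/g, '');
  // Assumed: digits only, 12 to 19 of them. OCR confusions such as the
  // letter "O" for zero fail here rather than producing a wrong number.
  if (!/^\d{12,19}$/.test(digits)) return false;

  let sum = 0;
  let double = false; // the rightmost (check) digit is not doubled
  for (let i = digits.length - 1; i >= 0; i--) {
    let d = digits.charCodeAt(i) - 48; // '0' is char code 48
    if (double) {
      d *= 2;
      if (d > 9) d -= 9; // same as summing the two digits of d
    }
    sum += d;
    double = !double;
  }
  return sum % 10 === 0;
}
```

For example, `isLuhnValid('4111 1111 1111 1111')` passes, while changing any single digit makes it fail, which is exactly the kind of slip an OCR pass can introduce.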
